Offloading your media files is a well-known tactic for speeding up your WordPress sites, but WP Offload Media can also greatly reduce the time it takes to replicate a new environment on a staging or development site, as well as the disk space needed to do so.

There’s more than one way to do this, and there is no canonical “best way.” In this article, we’ll look at some of the different strategies you can use, and outline their pros and cons.

The Long Way Around

Let’s say we have a client with a live site at example.com with a media library of over 5,000 images. They’ve asked us to start working on a full redesign. We need to set up a separate environment where we can work safely and the client can review our work before they give us the thumbs up to go live.

We start by deploying the client’s WordPress site to a new server with the domain staging.example.com. If you’re using WP Migrate Pro, you’ll have access to push/pull migrations, which let you move any and all files directly between two WordPress sites, pretty much at will.

Alternatively, we can use WP Migrate Lite to export whatever parts of the client’s site we need to a ZIP file, and then manually unpack everything on our staging site. Note that you can include WordPress core files in this ZIP, which is very handy for replicating the environment along with any settings present in the site’s wp-config.php.

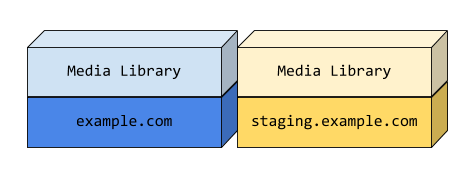

The media library is duplicated in both environments and served on the same domain as the host site.

Next, the three developers working on this project need to repeat the process to set up their local development environments. Later that day, the team is finally ready to get to work.

This method is acceptable for small sites, but it doesn’t scale well and the cost of maintaining it goes up proportionally with the size of the media library. This is particularly painful when you set up a new environment for the first time. A site with a very large media library can take hours or even days to download.

Centralizing the Media Library

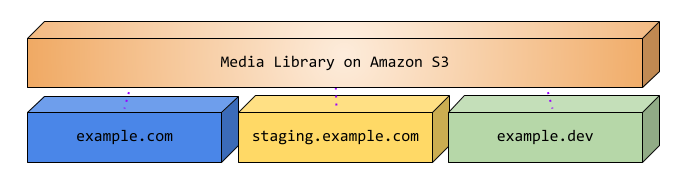

One of the benefits of WP Offload Media is how much it reduces the amount of disk space needed for each environment. Rather than duplicating the media library again and again, it can be relocated to a central location such as an Amazon S3 bucket. From there, it can be shared by multiple environments.

Because WP Offload Media keeps track of the offloaded URL for each media library item, each environment is able to share those assets without having them locally.

Achievement Unlocked: New environments in a fraction of the time!

The Dark Side of Shared Resources

Did you ever share a room with a sibling? Imagine sharing one with your exact clone; nothing you have is safe! The benefits of shared resources come with their own risks, but by understanding them we will be well equipped to avoid them.

In the case of WP Offload Media, the shared resource is the Amazon S3, DigitalOcean Spaces, or Google Cloud Storage bucket. The primary risk of sharing a bucket is that each environment potentially has the ability to upload, remove, or otherwise manipulate the contents of the bucket. Generally speaking, you only want the assets used by production to be modified from production.

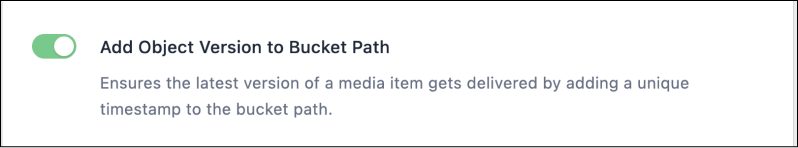

When using WP Offload Media’s recommended settings, you’re actually quite safe from one file overwriting another of the same name, thanks to the “Add Object Version to Bucket Path” setting.

This setting adds a timestamp to the path of the object in your bucket, based on when the image was offloaded. Locally, your file may have a path like this:

wp-content/uploads/2023/08/taylor-swift.png

With “Add Object Version to Bucket Path” enabled, within the bucket the same file would have a path like this:

wp-content/uploads/2023/08/22195347/taylor-swift.png

This gives the file a unique path. The media library helps prevent conflicts as well, by appending a number to items that would otherwise have the same filename. However, without object versioning there is still some risk that a new item added on the live site could compete for the same path in the bucket with an item of the same name in another environment, overwriting the previous file.

Including the object version in the bucket path makes this nearly impossible, while solving the issue of cache invalidation in the browser and on the CDN.

If you have enabled “Remove Files From Server”, WP Offload Media also performs additional checks to make sure that an offloaded file does not overwrite an existing file in your bucket. Note that this capability is not offered in WP Offload Media Lite.
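Since object versioning is what keeps environments from overwriting each other’s files, it can be worth pinning the setting in code so nobody switches it off by accident. Here is a minimal sketch for wp-config.php. It assumes the `object-versioning` key name from the plugin’s settings constants documentation, so check it against the version you have installed.

```php
<?php
// wp-config.php fragment: place it before wp-settings.php is loaded.
// Assumption: 'object-versioning' is the key behind the
// "Add Object Version to Bucket Path" setting. Verify it in the
// Settings Constants documentation for your plugin version.
define( 'AS3CF_SETTINGS', serialize( array(
    // Keep the timestamp folder (e.g. 22195347/) in every new object path.
    'object-versioning' => true,
) ) );
```

A real wp-config.php defines AS3CF_SETTINGS only once, so in practice this key would sit alongside your other settings in a single array.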

Cleaning Up

A lot of temporary data is often added as developers thoroughly test new features. No matter how you’re paying for storage, it isn’t free. There’s no reason to pay to keep a bunch of test junk hanging around once it has outlived its usefulness. In the event that we do want to upload to a bucket for non-production use, we also want to be able to clean up after ourselves easily.

Let’s explore a few strategies with a bit more consideration for the bucket contents and access to it.

Shared Bucket Strategy: No Access

Ideally, we should not be able to remove any assets used by production from a non-production environment. The easiest way to enforce this is to prevent the WP Offload Media plugin on your dev or staging site from accessing your bucket at all. This is as simple as removing the access keys set in the staging site’s wp-config.php file.

If the staging site is a clone of production, this is all that is required. However, if it is not a clone and therefore WP Offload Media’s settings are not available, then setting the region and bucket via the AS3CF_SETTINGS constant in wp-config.php will fully enable the settings screen. New media library items will not be offloaded and will be served from the local server, while existing items continue to be served from your S3 bucket or custom domain.
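For a staging site that is not a clone of production, the “no access” setup might look something like the sketch below. The bucket name and region are hypothetical placeholders, and the key names are assumed from the plugin’s settings constants documentation. The important part is what is missing: no access keys are defined anywhere.

```php
<?php
// wp-config.php fragment for a staging site: place it before wp-settings.php is loaded.
// Hypothetical values: swap in the bucket and region that production uses.
define( 'AS3CF_SETTINGS', serialize( array(
    'provider' => 'aws',                      // assumed key for the storage provider
    'bucket'   => 'example-production-media', // hypothetical bucket name
    'region'   => 'us-east-1',                // hypothetical region
    // Deliberately no 'access-key-id' or 'secret-access-key' here,
    // so this environment has no credentials for the bucket.
) ) );
```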

Pros

- Easiest to implement

- Items cannot be added or removed from the production bucket

- Existing offloaded media can continue to be served from the bucket and CDN

- No added cost

Cons

- New media is served differently than it will be on production

There are times when it may be necessary to test that new assets are being offloaded and URLs are being rewritten properly in a non-production environment. In this case, we need a different strategy.

Shared Bucket Strategy: Custom Path

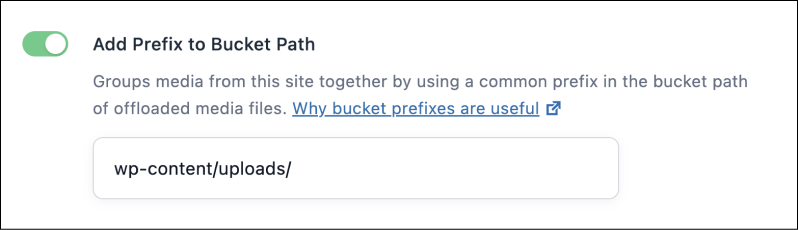

WP Offload Media’s “Add Prefix to Bucket Path” setting defines the base path to the uploads (media library) root directory within the bucket. By default, it mirrors the local filesystem path used by WordPress, but it can be changed to whatever you like.

Because this path is saved for every media library item, we’re free to change it without breaking URLs to existing items. Only items added after the change use the new path.

The benefit of this approach is that uploads from other environments are isolated in a separate path in the bucket from those added from production. When you’re done testing, you can simply log into the storage provider’s console and delete the directory at your custom path to safely remove all of your temporary objects.

It would also be wise to use different credentials for your staging/development environments, created with a more restrictive policy.
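As a rough sketch, a staging site using this strategy could set its own path and credentials in wp-config.php. The `staging/` prefix and the placeholder credentials are hypothetical, and the key names are assumed from the plugin’s settings constants documentation.

```php
<?php
// wp-config.php fragment for a staging site: place it before wp-settings.php is loaded.
define( 'AS3CF_SETTINGS', serialize( array(
    'provider'             => 'aws',
    // Placeholders for credentials created just for non-production use,
    // ideally with a policy more restrictive than production's.
    'access-key-id'        => 'STAGING_ACCESS_KEY_ID',
    'secret-access-key'    => 'STAGING_SECRET_ACCESS_KEY',
    // "Add Prefix to Bucket Path": new uploads from this environment
    // land under staging/ instead of the path production uses.
    'enable-object-prefix' => true,
    'object-prefix'        => 'staging/wp-content/uploads/', // hypothetical path
) ) );
```

With this in place, cleaning up means deleting the `staging/` directory in the bucket. Items offloaded earlier keep their saved paths and are not affected.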

Pros

- New media is offloaded and served from your bucket in all environments

- Media added from a non-production environment is isolated in the bucket and easy to clean up later

Cons

- Setup and maintenance of alternate credentials

Alternate Bucket Strategy

We could also change the bucket setting, defining a new bucket to be used by WP Offload Media for new media. As we saw with the path above, the bucket is also saved to each media library item, so only new media is affected by the change of bucket. Since this is essentially the same as the path-based strategy, with all of the same pros and cons, we can move on to our last strategy.

Mirrored Bucket Strategy

Here we will clone the bucket used by production into a new bucket for our non-production environments to share. This is the best option for replicating the production environment as closely as possible, without the risk of contaminating or otherwise messing with the bucket used by production.

As mentioned above, the bucket used is stored in the database for each media library item. To ensure WP Offload Media uses the mirrored bucket, we must run a search and replace on the database to change the old bucket name to the new one. This can be done with WP Migrate Pro at migration time, or with WP Migrate Lite or WP-CLI after the fact. This can be a challenge in and of itself, depending on the bucket name. For example, if the original bucket name is the same as your site’s domain, a find and replace on that name would also rewrite every occurrence of the domain in your database, which could have unintended consequences.

Once we have the new bucket created that we want to use, we still need to copy over all of the objects into it from the production bucket. Depending on how large your bucket is, this could take a while if we were to download everything locally and then re-upload it to the new bucket.

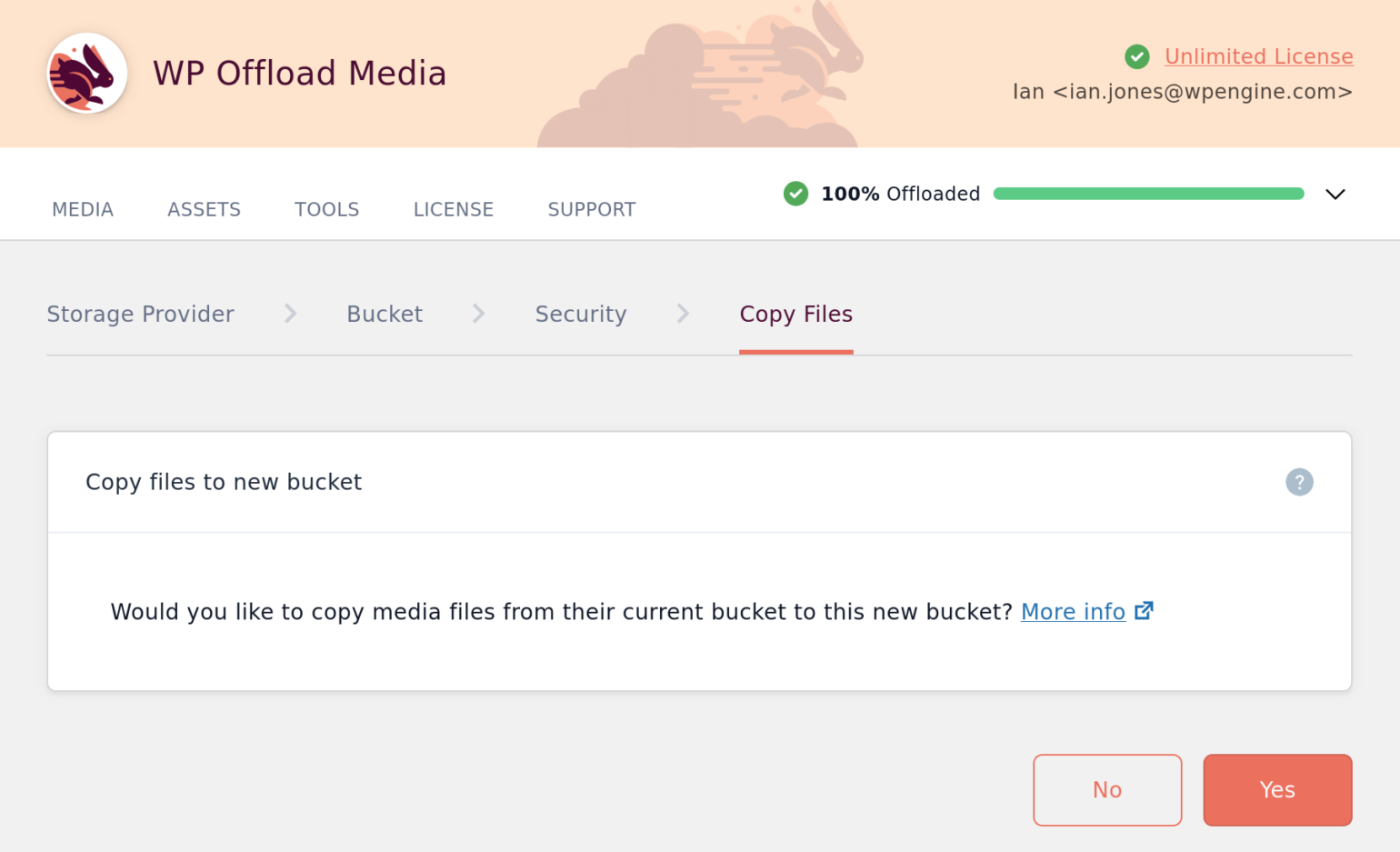

Exactly how you do this with WP Offload Media Lite varies depending on the storage provider. The full version of WP Offload Media can do this directly within the plugin: you create a new bucket from the plugin’s UI and then copy over all of the existing bucket’s contents.

The process is streamlined and extremely fast. A “Copy Files” prompt appears once you’ve selected the new bucket. At this point, all you need to do is click Yes to begin the copying process.

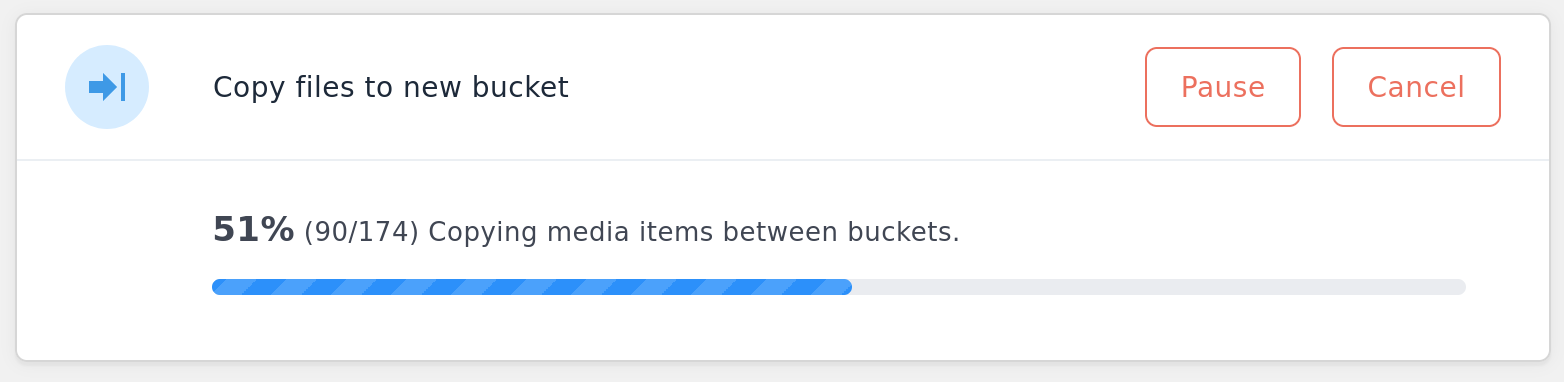

Naturally, the more data in the bucket, the longer the process will take. WP Offload Media keeps you informed of the status while in progress, and notifies you on the plugin’s settings page when it’s complete.

Pros of the Mirrored Bucket Strategy

- All environments serve assets in the same way as production

- All environments can add/remove all objects from their buckets

- Cleaning up later is as easy as deleting the mirrored bucket

Cons of the Mirrored Bucket Strategy

- Most time-consuming and potentially difficult to set up

- Paying for duplicate objects

Creating an Environment-Based Configuration

Manually changing settings via the UI every time you refresh your staging database is a recipe for disaster. For example, forgetting to switch a payment gateway to sandbox mode could cause serious problems. Forgetting to swap out credentials could as well. The solution is to include this configuration in the application code.

The basic goal here is to remove the database from the equation for a select few settings and enforce an environment-specific value. To do this, you’ll need to include some extra PHP in the app which is deployed to all environments.

All of WP Offload Media’s settings can be controlled via constants. Any settings defined within these constants take precedence over those stored in the database. The definitions for the constants can be added to wp-config.php, anywhere before wp-settings.php is loaded.

Controlling your settings via constants allows you to ensure the settings only take effect on non-production sites, input alternate/dummy credentials, or simply use a different bucket than the one used on your production site. For more comprehensive information, refer to the WP Offload Media documentation for Settings Constants.
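To make this concrete, here is one way an environment-based configuration could look. It is a sketch under a few assumptions: each server sets a `WP_ENVIRONMENT_TYPE` environment variable, the bucket names are hypothetical, and the key names are taken from the plugin’s settings constants documentation. Credentials are left out to keep the example short.

```php
<?php
// wp-config.php fragment: place it before wp-settings.php is loaded.

// Assumption: the production server sets WP_ENVIRONMENT_TYPE=production.
// getenv() returns false when the variable is not set.
$environment = getenv( 'WP_ENVIRONMENT_TYPE' );

// Settings shared by every environment.
$offload_settings = array(
    'provider'          => 'aws',
    'object-versioning' => true,
);

if ( 'production' === $environment ) {
    $offload_settings['bucket'] = 'example-production-media'; // hypothetical name
} else {
    // Anything not explicitly marked as production uses the mirrored bucket.
    $offload_settings['bucket'] = 'example-staging-media'; // hypothetical name
}

// Values defined here take precedence over those stored in the database.
define( 'AS3CF_SETTINGS', serialize( $offload_settings ) );
```

Treating a missing or unexpected value as non-production is a deliberate choice in this sketch: a server that was never configured ends up on the mirrored bucket rather than the production one.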

Wrapping Up

Offloading your WordPress media library can help make spinning up new environments quicker and easier. You can protect the media in your production bucket by using alternate credentials for each environment, and enforce those environment-specific settings in your code to avoid mistakes.

Which strategy do you use for WP Offload Media in different environments? Do you have a creative solution for environment-based configuration in WordPress? If so, tell us all about it in the comments below!